Brain-Inspired Chip Could Make AI 2,000 Times More Energy Efficient, and It Matters for Crypto

- Researchers at Loughborough University have demonstrated a brain-inspired neuromorphic chip that achieves up to 2,000x lower energy consumption than standard software-based AI methods for time-series prediction tasks, by processing data directly in hardware using nanoporous niobium oxide memristors.

- The chip targets the von Neumann bottleneck, the structural inefficiency in conventional AI hardware that can waste up to 80% of processor power moving data between memory and compute units. That has direct implications for any AI workload involving sensor data, financial signals, or biological monitoring.

- DePIN protocols, including Bittensor (TAO), Render (RENDER), Akash (AKT), and Fetch.ai (FET), stand to benefit structurally if neuromorphic hardware reaches commercial production, as lower energy-per-inference costs would reduce node operating expenses and make hardware participation viable in more markets with high electricity prices.

A chip that processes information the way the human brain does, at a fraction of the power cost of conventional hardware, has just demonstrated up to 2,000 times better energy efficiency than today’s software-based AI methods on time-series tasks. It is, however, still at an early research stage.

For the crypto and decentralized AI sector, the more useful question is not whether this technology arrives, but how quickly it does, and who benefits when it does.

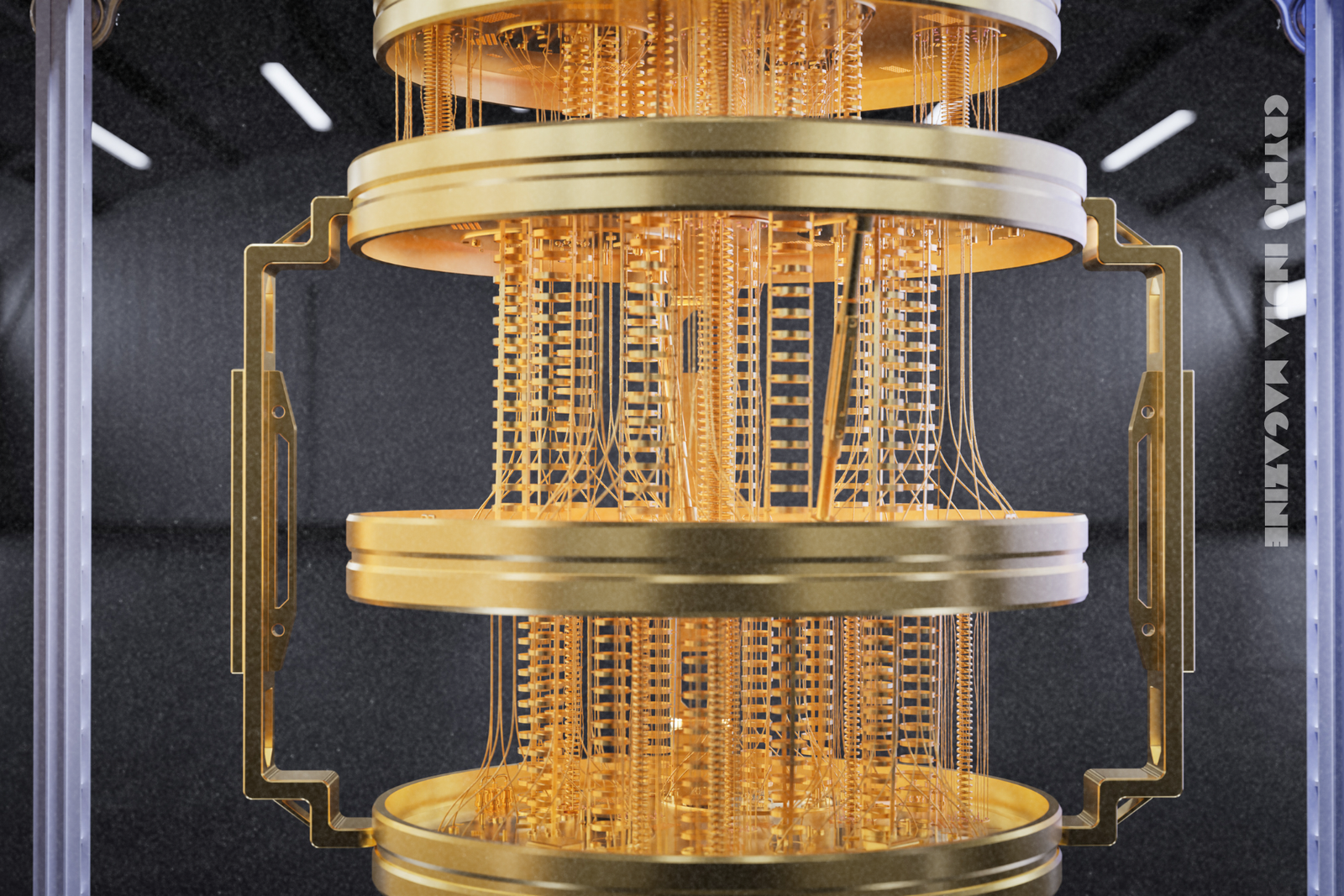

A neuromorphic chip is a computing device that mimics the structure and operation of biological neural networks to process information more efficiently than conventional processors. Researchers at Loughborough University in the United Kingdom have published a study in Advanced Intelligent Systems demonstrating a neuromorphic chip that processes time-dependent data directly in hardware rather than relying on external software. It has achieved up to 2,000 times lower energy consumption on specific tasks compared to standard software-based methods.

What the Loughborough Chip Actually Does

The chip targets a specific and expensive problem: processing data that changes over time (time-series data) without constantly shuttling information between separate memory and processing units.

Conventional AI hardware follows the von Neumann architecture, a computing design in which memory and processing are physically separate components. During AI workloads, data must travel back and forth between the two units repeatedly. This structural inefficiency, known as the von Neumann bottleneck, can waste up to 80% of the processor’s power on data movement rather than computation, according to R. Thompson’s 2025 analysis in Data Science Collective.
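A quick back-of-the-envelope check, in the spirit of Amdahl’s law, shows why the chip’s gains cannot come from the bottleneck alone. The sketch below assumes, purely for illustration, that the 80% figure applies and that the energy of the arithmetic itself stays unchanged. Under that assumption, removing all data movement caps the saving at 5x, so most of a 2,000x gain would have to come from replacing the software computation itself, which is consistent with the chip doing that work in the material.

```python
def max_gain_from_removing_overhead(overhead_fraction: float) -> float:
    """Upper bound on energy reduction if one share of total energy is eliminated.

    Assumes (for illustration) that the remaining share, the computation
    itself, costs exactly what it did before.
    """
    if not 0.0 <= overhead_fraction < 1.0:
        raise ValueError("overhead_fraction must be in [0, 1)")
    return 1.0 / (1.0 - overhead_fraction)


for share in (0.5, 0.8):
    gain = max_gain_from_removing_overhead(share)
    print(f"{share:.0%} of energy removed -> at most {gain:.1f}x saving")
# 80% -> 5.0x
```

Any factor far above 5x therefore implies that the computation itself became cheaper, not just the data movement around it.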

The Loughborough device sidesteps this bottleneck for time-series tasks by moving the computation into the material itself. At its core is a memristor, an electronic component that retains information about past inputs and alters its response to new signals based on that history. The memristor is fabricated from nanoporous niobium oxide, a material in which random nanopores create many electrical pathways. These pathways act as the hidden processing layer of a neural network: the physical structure of the device performs a computation that conventional systems would run in software.
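A common way to reason about a volatile memristor is as a conductance controlled by an internal state that applied voltage pushes up and that relaxes over time. The toy model below is purely hypothetical: the linear state update, the clipping, the class name `ToyMemristor`, and every parameter value are illustrative assumptions, not the physics of the niobium oxide device. It shows the one property the paragraph above relies on, namely that the same input produces a different output depending on history.

```python
from dataclasses import dataclass


@dataclass
class ToyMemristor:
    """Hypothetical volatile memristor with fading memory (illustrative values only)."""

    g_off: float = 1e-6  # conductance in siemens when the state w is 0
    g_on: float = 1e-4   # conductance in siemens when the state w is 1
    gain: float = 0.5    # how strongly each voltage pulse drives the state
    decay: float = 0.2   # fraction of the state lost per step (fading memory)
    w: float = 0.0       # internal state, kept within [0, 1]

    def step(self, voltage: float) -> float:
        """Apply one voltage pulse, update the state, and return the current in amps."""
        self.w += self.gain * voltage - self.decay * self.w
        self.w = min(max(self.w, 0.0), 1.0)  # keep the state physically bounded
        conductance = self.g_off + (self.g_on - self.g_off) * self.w
        return conductance * voltage


quiet, busy = ToyMemristor(), ToyMemristor()
for _ in range(3):
    quiet.step(0.0)  # no stimulation: state stays at rest
    busy.step(1.0)   # repeated pulses: state builds up
i_quiet = quiet.step(0.5)
i_busy = busy.step(0.5)
print(i_quiet < i_busy)  # True: same pulse, different history, different current
```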

“Inspired by the way the human brain forms very numerous and seemingly random neuronal connections between all its neurons, we created complex, random, physical connections in an artificial neural network by designing pores in nanometre-thin films of niobium oxide as part of a novel electronic device,” said Dr. Pavel Borisov, Senior Lecturer in Physics at Loughborough University and lead author of the study.

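The technique the chip implements is called reservoir computing, a framework for processing temporal data. It passes incoming data through a fixed, complex dynamical system (the “reservoir”) that projects it into a high-dimensional space where patterns are easier to detect and predict; only a simple output layer is then trained. Because the reservoir itself is never trained, a physical material with rich, history-dependent behaviour can play that role. Reservoir computing is well suited to chaotic systems and other data streams that are sensitive to small changes, such as weather modelling, financial time-series analysis, and biological signal monitoring.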
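In software, reservoir computing is often illustrated with an echo state network: a fixed random recurrent network maps each input into many internal states, and only a linear readout is fitted. The NumPy sketch below is a stand-in for the idea, not the Loughborough hardware; the random weights loosely play the role of the random nanopore pathways, and the node count, spectral radius, input scaling, ridge penalty, and test signal are all hypothetical choices. It trains a one-step-ahead predictor on a two-tone signal.

```python
import numpy as np


def run_reservoir(inputs, n_nodes=100, spectral_radius=0.9, input_scale=0.5, seed=0):
    """Drive a fixed random recurrent network with a 1-D signal; return its states."""
    rng = np.random.default_rng(seed)
    w_in = rng.uniform(-input_scale, input_scale, size=n_nodes)
    w = rng.uniform(-0.5, 0.5, size=(n_nodes, n_nodes))
    # Rescale so the largest eigenvalue magnitude is below 1, giving fading memory.
    w *= spectral_radius / np.max(np.abs(np.linalg.eigvals(w)))
    states = np.zeros((len(inputs), n_nodes))
    x = np.zeros(n_nodes)
    for t, u in enumerate(inputs):
        x = np.tanh(w @ x + w_in * u)  # the reservoir weights are never trained
        states[t] = x
    return states


def train_readout(states, targets, ridge=1e-6):
    """Fit the only trained component: a linear readout, via ridge regression."""
    gram = states.T @ states + ridge * np.eye(states.shape[1])
    return np.linalg.solve(gram, states.T @ targets)


t = np.arange(0, 60, 0.1)
signal = np.sin(t) + 0.5 * np.sin(0.37 * t)
states = run_reservoir(signal[:-1])  # state after seeing signal[i] ...
targets = signal[1:]                 # ... is used to predict signal[i + 1]
washout = 100                        # discard the start-up transient
w_out = train_readout(states[washout:], targets[washout:])
pred = states[washout:] @ w_out
nrmse = np.sqrt(np.mean((pred - targets[washout:]) ** 2)) / np.std(targets[washout:])
print(f"one-step training NRMSE: {nrmse:.4f}")
```

Because only `w_out` is learned, training reduces to one linear solve; that is the property that lets a physical device serve as the reservoir.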

The 2,000x Number and What It Is Actually Measuring

The 2,000-fold efficiency gain applies specifically to time-series prediction tasks, not to general-purpose AI workloads such as large language model (LLM) inference or image generation. The study tested the chip on 3 task categories: predicting the short-term behaviour of the Lorenz-63 system, recognizing simple pixelated images of numbers, and performing basic logic operations.

The Lorenz-63 system is a well-known mathematical model of chaotic dynamics, associated with the butterfly effect, in which small differences in initial conditions produce dramatically different outcomes. It is a standard benchmark for evaluating reservoir computing performance.
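The Lorenz-63 equations are dx/dt = σ(y - x), dy/dt = x(ρ - z) - y, dz/dt = xy - βz, usually with σ = 10, ρ = 28, β = 8/3. The sketch below integrates two trajectories that start 1e-8 apart and tracks how far apart they drift, which is why benchmarks on this system focus on short-term prediction. The starting point, step size, and horizon are arbitrary illustrative choices.

```python
def lorenz63(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Time derivative of the Lorenz-63 system with its classic parameters."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(f, state, dt):
    """Advance one step with the classical fourth-order Runge-Kutta method."""
    k1 = f(state)
    k2 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
    k3 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
    k4 = f(tuple(s + dt * k for s, k in zip(state, k3)))
    return tuple(
        s + dt / 6.0 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def separation_over_time(delta=1e-8, dt=0.01, steps=3000):
    """Integrate two trajectories that start `delta` apart; return their distances."""
    a = (1.0, 1.0, 1.0)
    b = (1.0 + delta, 1.0, 1.0)
    distances = []
    for _ in range(steps):
        a = rk4_step(lorenz63, a, dt)
        b = rk4_step(lorenz63, b, dt)
        distances.append(sum((p - q) ** 2 for p, q in zip(a, b)) ** 0.5)
    return distances


d = separation_over_time()
print(f"after 1 time unit:     {d[99]:.2e}")
print(f"largest over 30 units: {max(d):.2f}")
```

The first line stays tiny while the second reaches the scale of the attractor itself: a perfect model loses predictive power quickly once small errors compound.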

In those tests, the chip processed chaotic time-series data and fed its output into a lightweight linear readout model. Together, they produced accurate short-term predictions at roughly 2,000 times lower energy cost than an equivalent software-based solution running on a conventional processor, according to the paper by Dr. Borisov’s team published in Advanced Intelligent Systems in March 2026.

Professor Sergey Saveliev, an expert in theoretical physics and co-author of the study, described the design philosophy directly.

“This is a great example of how fundamental physics can contribute to modern computations, avoiding huge computational overheads by using the complexity of physical systems as a high dimensional filter for data,” according to the Loughborough University press release.

The 2,000x figure is a ceiling measured under controlled lab conditions. The research team is explicit about the current limitations: tests were conducted on relatively simple tasks, and the system has not yet been evaluated against noisier, real-world data streams. “The next steps are to increase the complexity of the neural networks and to conduct tests with input data that include much more signal noise,” Dr. Borisov said. The team characterises the approach as “scalable and practical” but acknowledges that meaningful engineering work remains before commercial deployment.

Why AI’s Energy Problem Is a Crypto Problem

Electricity consumption by data centers, AI, and cryptocurrency mining combined is forecast to reach between 620 and 1,050 terawatt-hours (TWh) annually by 2026, up from 460 TWh in 2022, according to International Energy Agency (IEA) projections. The upper end of that range is roughly equivalent to Japan’s total electricity consumption. A terawatt-hour is one trillion watt-hours, a standard unit for large-scale energy measurement.
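Annual energy figures are easier to compare with power-plant or grid capacity when converted to an average continuous draw. The short conversion below is a sketch that assumes a 365-day year and perfectly flat demand, which real loads are not; the function name is illustrative.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours; leap years ignored


def twh_per_year_to_avg_gw(twh_per_year: float) -> float:
    """Convert annual energy use (TWh/yr) into an equivalent constant draw (GW)."""
    # 1 TWh = 1,000 GWh; spreading those GWh over the hours in a year gives GW.
    return twh_per_year * 1000 / HOURS_PER_YEAR


BASELINE_2022 = 460  # TWh, the 2022 IEA figure cited in the text
for label, twh in [("2022", 460), ("2026 low", 620), ("2026 high", 1050)]:
    avg_gw = twh_per_year_to_avg_gw(twh)
    growth = twh / BASELINE_2022
    print(f"{label:>9}: {twh:>5} TWh/yr = {avg_gw:6.1f} GW average, {growth:.2f}x 2022")
```

On this basis the upper 2026 estimate corresponds to roughly 120 GW of round-the-clock demand.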

The IEA projects that data center electricity consumption will grow to 945 TWh per year by 2030, from 415 TWh in 2024. The US Energy Information Administration (EIA) separately projects US commercial electricity sector consumption will grow by 5% in 2026, with data centers cited as the primary driver. Research from Carnegie Mellon University estimates that data centers and cryptocurrency mining could increase average US electricity bills by 8% by 2030, exceeding 25% in the highest-demand markets.
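To put the IEA data center projection in annual terms, the sketch below computes the compound annual growth rate it implies. This assumes smooth compounding between the two endpoints, which the projection itself does not claim.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


# IEA data center projection cited in the text: 415 TWh (2024) -> 945 TWh (2030).
rate = cagr(415, 945, 2030 - 2024)
print(f"Implied growth: {rate:.1%} per year")  # about 14.7% per year
```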

This energy constraint is structural, not cyclical: as AI models grow in parameter count and adoption broadens, energy demand compounds. The Loughborough chip is a peer-reviewed demonstration of hardware that addresses this constraint at the architectural level for time-series workloads, not by optimising software efficiency or switching energy sources, but by redesigning where computation happens.

The Direct Line to DePIN and Decentralized Compute

DePIN (Decentralised Physical Infrastructure Networks) is a blockchain-coordinated model for building and operating physical infrastructure, including AI compute networks, through tokenised incentives distributed to hardware contributors rather than centralized operators. The DePIN sector held a combined market capitalisation of approximately $19.2 billion as of September 2025, up from $5.2 billion a year earlier, a 270% year-on-year increase. The sector now includes approximately 250 active projects spanning compute, wireless, storage, and environmental data networks.

The energy efficiency of the hardware nodes that contribute to DePIN networks directly affects the economics of participation. Higher energy costs reduce node operator margins and erode the net value of token rewards. A chip class that cuts energy consumption by orders of magnitude for the AI tasks most relevant to edge deployment (sensor data, time-series monitoring, and autonomous agent inference) would change the cost-of-participation calculation for hardware contributors running those workloads.
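The margin effect can be sketched with a deliberately simple model. Everything below is hypothetical: the daily reward, the node’s energy use, the electricity prices, the 100x gain, and the `share_accelerated` parameter (the fraction of a node’s energy spent on tasks the new hardware could handle) are illustrative placeholders, not measured DePIN data. The point is that an efficiency gain which applies to only part of a workload still shifts which electricity markets are viable.

```python
def node_energy_after_upgrade(energy_kwh, share_accelerated, efficiency_gain):
    """Daily energy a node would use if only part of its workload got more efficient."""
    accelerated = energy_kwh * share_accelerated / efficiency_gain
    unchanged = energy_kwh * (1 - share_accelerated)
    return accelerated + unchanged


def daily_margin(reward_usd, energy_kwh, price_usd_per_kwh):
    """Daily operator profit after electricity costs (hardware costs ignored)."""
    return reward_usd - energy_kwh * price_usd_per_kwh


# Hypothetical node: all numbers are illustrative placeholders.
REWARD_USD, ENERGY_KWH = 5.00, 20.0
for share in (0.5, 1.0):
    energy = node_energy_after_upgrade(ENERGY_KWH, share, efficiency_gain=100)
    for price in (0.10, 0.30):  # USD/kWh in a cheaper vs a pricier market
        before = daily_margin(REWARD_USD, ENERGY_KWH, price)
        after = daily_margin(REWARD_USD, energy, price)
        print(f"share {share:.0%}, ${price:.2f}/kWh: ${before:.2f} -> ${after:.2f} per day")
```

With these placeholder numbers, the node in the pricier market goes from losing money to turning a profit even when only half its workload benefits.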

The most directly affected protocols are those running time-series-heavy workloads on distributed hardware. Four stand out.

- Bittensor (TAO) is a decentralised AI network (a protocol that coordinates competitive machine learning model development through token incentives), where subnets focused on financial time-series prediction and real-time data feeds would benefit directly from lower inference energy costs.

- Render (RENDER) is a decentralised GPU rendering and AI inference marketplace where energy cost per task is a core determinant of operator profitability.

- Akash Network (AKT) is a decentralised cloud compute marketplace where more efficient edge hardware expands the viable participant base beyond high-end GPU owners.

- Fetch.ai (FET) is an autonomous AI agent network where time-dependent signal processing is a primary use case for deployed agents monitoring on-chain conditions and sensor data.

The human brain runs all its cognitive operations on approximately 20 watts, comparable to two standard LED bulbs, according to PNAS research from October 2025. AI data centers consume millions of times more energy, often for tasks the brain handles far more efficiently. The neuromorphic approach does not replicate the full brain, but it does demonstrate that specific categories of AI computation can be brought dramatically closer to biological efficiency levels.

The Gap Between the Lab and the Network

The chip is research-grade hardware as of April 2026: it is effective at simple benchmark tasks but not yet validated on the complex, noisy, high-dimensional data streams that real-world DePIN nodes process. The research team identifies 3 requirements for the technology to reach practical deployment: greater neural network complexity within the chip architecture, performance validation against real-world noisy input data, and demonstration of the manufacturing consistency required for industry-compatible production.

The research was funded by the Engineering and Physical Sciences Research Council (EPSRC), the primary UK public body for funding physical sciences and engineering research, rather than by a private-sector or commercial partner. The path from an EPSRC-funded lab proof-of-concept to a chip inside a DePIN node can span 5 to 10 years of engineering development, fabrication scale-up, and integration work. Intel’s Loihi 2 neuromorphic chip, which supports up to about 1 million neurons per chip and has been scaled past 1 billion neurons in Intel’s multi-chip Hala Point research system, was released in September 2021 and remains primarily used in research contexts as of 2026, according to PNAS.

IBM’s NorthPole neuromorphic chip, released in October 2023, demonstrated large energy-efficiency gains on certain AI tasks, according to IBM’s chief scientist for brain-inspired computing, Dharmendra Modha. Neither chip has yet displaced GPU-based infrastructure in commercial AI or DePIN deployments. The Loughborough result adds another proof-of-concept, strengthening the broader case that brain-inspired hardware can deliver large efficiency gains on specific tasks, but the commercialisation timeline remains in the range of years, not months.

What 2,000x Efficiency Means When It Arrives at Scale

If neuromorphic chips achieve even a fraction of this efficiency gain in production, say 100x rather than 2,000x, the implications for decentralized AI infrastructure are structural, not marginal. Lower energy per computation means lower operating costs per node, wider participation in DePIN networks from regions with higher electricity prices, and smaller hardware form factors viable for edge deployment, including wearables, autonomous vehicles, and IoT sensors, the categories Dr. Borisov cited directly in his comments to Decrypt.

“My end goal would be for this kind of technology to be used in a time-dependent signal. Whether that’s in a car, a robot, a nuclear power plant, or in a smart watch,” Dr. Borisov told Decrypt. “For example, to monitor if someone has a stroke or not, to monitor the health of a car engine, or that the nuclear reactor is operating normally.”

Every one of those use cases (health monitoring, industrial sensing, vehicle systems) generates time-series data of the kind decentralised AI networks are being built to process and act on. The DePIN infrastructure being assembled now, through Bittensor, Render, Akash, Fetch.ai, and others, is building the coordination and incentive layer. The neuromorphic hardware being validated in labs now is building the energy-efficient execution layer. When those two development arcs converge, the economics of decentralised AI change materially.

The research team believes it can get there.

“We believe this is a scalable and practical approach to creating small, industry-compatible devices for AI applications with much better energy efficiency and offline capabilities,” Dr. Borisov said in the Loughborough University press release.

Track This Research Before It Reshapes Decentralized Compute

A neuromorphic chip built from nanoporous niobium oxide has demonstrated up to 2,000 times lower energy consumption than software-based AI methods on time-series tasks. The chip processes information in hardware using the physical properties of its material, sidestepping the von Neumann bottleneck that can consume up to 80% of a conventional processor’s power. The research was published in Advanced Intelligent Systems by a team at Loughborough University, funded by the EPSRC, and is currently at the proof-of-concept stage.

Three things to monitor as this technology matures:

- Whether major neuromorphic chip developers, such as Intel (Loihi), IBM (NorthPole), or emerging startups, license or build on the nanoporous oxide memristor approach for production hardware.

- Whether DePIN protocols like Bittensor or Akash begin specifying energy-per-inference metrics in their node hardware requirements, a signal that efficiency has become a competitive variable.

- Whether the Loughborough team secures commercial or institutional co-funding for the next development phase. A shift from EPSRC grants to industry partnerships is typically the signal that a chip architecture has cleared the lab-to-market threshold.